Success Story: A POP proof-of-concept allows a Bunsen flame use case from EXCELLERAT to run two times faster

Highlights:

- Keywords: Performance optimisation, Assessment, PoC, MPI, DLB, Load imbalance

- Industry sector: Engineering

- Key codes used: Alya

Organisations & Codes Involved:

- The performance assessment consists of a performance analysis that identifies the main bottlenecks and scalability issues and includes suggestions to address them.

- The proof-of-concept is a demonstration by the POP experts of some of the suggestions provided in the performance assessment. It usually consists of small code changes and an evaluation of the implementation.

The organisation involved: BSC. Barcelona Supercomputing Center (BSC) is the national supercomputing centre in Spain. BSC specialises in High Performance Computing (HPC) and manages MareNostrum IV, one of the most powerful supercomputers in Europe. BSC is at the service of the international scientific community and of industry that requires HPC resources. The Computing Applications for Science and Engineering (CASE) Department of BSC is involved in this application, providing the application case and the simulation code for this demonstrator.

The code involved: Alya. The code used for this use case is Alya, the high performance computational mechanics code from BSC, designed to solve complex coupled multi-physics / multi-scale / multi-domain problems from the engineering realm. Alya was specially designed for massively parallel supercomputers, and its parallelisation embraces four levels of the computer hierarchy:

1) A sub-structuring technique with MPI (Message Passing Interface) as the message passing library is used for distributed memory supercomputers.

2) At the node level, both loop and task parallelism are considered, using OpenMP (Open Multi-Processing) as an alternative to MPI. Dynamic load balance techniques have also been introduced to better exploit computational resources at the node level.

3) At the CPU level, some kernels are also designed to enable vectorisation.

4) Finally, accelerators such as GPUs are exploited through OpenACC pragmas or with CUDA to further enhance the performance of the code on heterogeneous computers.

Alya is one of only two CFD codes in the Unified European Applications Benchmark Suite (UEABS) and in the Accelerator benchmark suite of PRACE.

Topics of collaboration:

A POP performance assessment has been performed on an EXCELLERAT use case solved with Alya that simulates a Bunsen flame. The assessment revealed that the main factor limiting scalability for this use case is load imbalance. The load imbalance is inherent to the problem being solved, because the chemical reactions only happen, and are thus only computed, in a small section of the domain. Moreover, where the chemical reactions will happen cannot be computed beforehand, so the domain partition cannot be adjusted to minimise the imbalance. One of the suggestions included in the performance assessment is to use the DLB (Dynamic Load Balancing) library to address the load imbalance. The potential of this approach has been shown in a POP proof-of-concept.
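To make the notion of load imbalance concrete, the sketch below computes a load balance efficiency as the average useful computation time across ranks divided by the maximum, which is how the POP methodology commonly defines it. The function name and the per-rank times are illustrative assumptions, not measurements from the Bunsen flame runs.

```python
def load_balance_efficiency(useful_seconds):
    """Average useful time over ranks divided by the maximum useful time.

    1.0 means perfectly balanced; lower values mean that most ranks
    wait for the slowest one.
    """
    average = sum(useful_seconds) / len(useful_seconds)
    return average / max(useful_seconds)


# Hypothetical per-rank useful times: one rank holds the reacting
# region of the domain, the other three have little chemistry to do.
hypothetical_times = [10.0, 2.0, 2.0, 2.0]
print(load_balance_efficiency(hypothetical_times))
```

With these made-up values, three of the four ranks are idle for most of the phase, which is the pattern a trace of an imbalanced run would show.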

Results of collaboration:

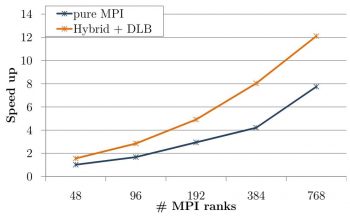

The performance obtained by following the POP experts' recommendation to use the DLB library was evaluated up to 768 MPI ranks (16 MareNostrum IV nodes). Figure 1 shows the speedup obtained with respect to the original run with 48 MPI ranks (1 MareNostrum IV node). With the DLB library, the use case runs two times faster than the original code regardless of the number of MPI processes, up to 768 MPI ranks.
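As a reminder of how such a speedup curve is read, the sketch below computes speedup relative to a fixed baseline run. The timings are placeholders chosen only to illustrate the arithmetic; they are not the measured values behind Figure 1.

```python
def speedup(baseline_seconds, run_seconds):
    """Speedup of a run relative to a fixed baseline run."""
    return baseline_seconds / run_seconds


# Placeholder timings (assumptions, not measurements).
baseline_48_ranks = 100.0   # original code, 48 MPI ranks
original_192_ranks = 40.0   # original code, 192 MPI ranks
dlb_192_ranks = 20.0        # same run with DLB enabled

s_original = speedup(baseline_48_ranks, original_192_ranks)
s_dlb = speedup(baseline_48_ranks, dlb_192_ranks)

# The gain attributable to DLB at a given rank count is the ratio
# of the two speedups measured against the same baseline.
print(s_original, s_dlb, s_dlb / s_original)
```

Because both curves share the same baseline, a constant factor of two between them at every rank count corresponds to the "two times faster" statement.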

What did we manage to do together that we could not have done separately:

This collaboration brings together combustion experts, who provide a production use case representative of a common issue in combustion simulations, and POP experts, who offer their performance and optimisation knowledge to achieve better efficiency.

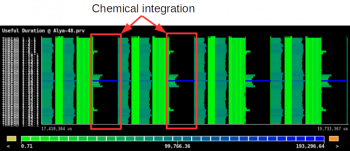

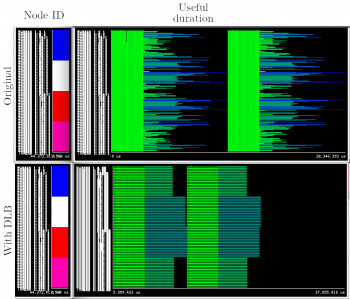

The main issue in this use case was the load balance. Figure 2 shows the useful duration in a trace of four steps of the Bunsen flame use case run with 48 MPI ranks. The red squares indicate the chemical integration phase, where the load imbalance is clearly visible.

The proposal to address this issue, agreed on by POP and EXCELLERAT, was to use the DLB library, as DLB is able to solve load imbalances that cannot be predicted beforehand and that can change during the execution.

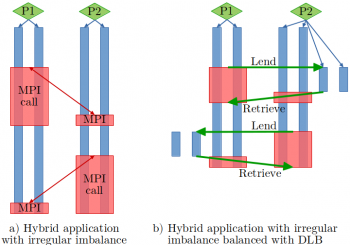

DLB uses the second level of parallelism (the shared memory level) to solve the load imbalance at the MPI level. It does so by giving more threads to the more heavily loaded processes when the less loaded processes reach a blocking MPI call. Figure 3 shows an example of a hybrid application with load imbalance (left), launched with 2 MPI processes and 2 OpenMP threads each. On the right-hand side, we can see the same application with DLB. When MPI process 1 reaches a blocking MPI call, it lends its threads to MPI process 2. From this point on, MPI process 2 can use four threads to finish its computation.
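The lending mechanism described above can be illustrated with a small idealised model. This is not the DLB API: the function names and work amounts are assumptions, and the model further assumes that work is perfectly divisible among threads and that lent cores are shared evenly among the processes that are still computing on the same node.

```python
def makespan_without_lending(work, threads_per_proc):
    """Every process keeps its own threads; the slowest one sets the time."""
    return max(work) / threads_per_proc


def makespan_with_lending(work, threads_per_proc):
    """Processes that finish lend their cores to those still computing."""
    total_cpus = threads_per_proc * len(work)
    remaining = sorted(work)  # least loaded process finishes first
    elapsed = 0.0
    while remaining:
        cpus_each = total_cpus / len(remaining)
        step = remaining[0] / cpus_each   # time until the next process ends
        elapsed += step
        done = step * cpus_each           # work completed by each process
        remaining = [w - done for w in remaining[1:]]
    return elapsed


# Two MPI processes with two threads each, as in the Figure 3 scenario.
# Work is given in hypothetical thread-time units.
work = [4.0, 12.0]
print(makespan_without_lending(work, 2))
print(makespan_with_lending(work, 2))
```

In this model the second process runs on two threads until the first one finishes, then on all four, so the node completes in the time a perfectly balanced run would take. Real gains depend on how well the imbalanced region is parallelised at the shared memory level and on the fact that cores can only be lent within a node.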

The suggestion to use DLB to solve the load imbalance has been implemented within a POP proof-of-concept (PoC). In this PoC, POP has parallelised the part of the code that suffers from load imbalance with a shared memory programming model. Load balancing has then been enabled with DLB by adding a call to its API (Application Programming Interface).

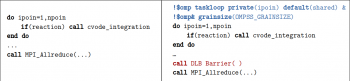

Listing 1 shows the original code (left) and the modified code (right) that enables load balancing with DLB. The code added to parallelise with OmpSs is shown in blue, and the code necessary to enable DLB in this section of the code is shown in red.

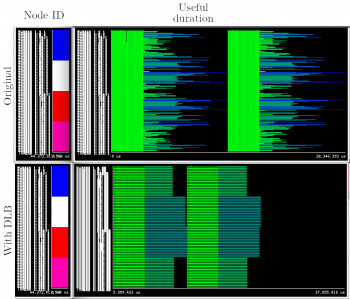

Figure 4 shows two traces comparing the original execution (top) with 192 MPI processes and the same execution with DLB (bottom). This highlights that the DLB execution is able to load balance the MPI processes inside the same node, running two times faster than the original code.

PRODUCTS/SERVICES:

- DLB (Dynamic Load Balancing) library, currently also embedded in Alya as a demonstrator. Type of licence of the DLB library: LGPL3.

- Best practice guidelines for scientific purposes.

- Joint collaborative research (Academia, R&D Centers)

- Research contracts (industry).

UNIQUE VALUE:

- Solve load imbalances that cannot be predicted beforehand and that can change during the simulation execution.

If you have any questions related to this success story, please register on our Service Portal and send a request on the “my projects” page.