White Paper: Empowering Large-Scale Turbulent Flow Simulations With UQ Techniques

An effective, robust simulation must account for potential sources of uncertainty. Computational fluid dynamics (CFD), in particular, has to deal with many uncertainties from various sources. The real world, after all, forces many kinds of uncertainties upon engineering components – everything from changes in numerical and computational parameters to uncertainty in initial and boundary conditions and geometry. No matter how expensive a flow simulation is, the uncertainties have to be assessed. In CFD, uncertainty is inevitable. But it presents us with a question: how do you know which uncertainties to expect and quantify without using an enormous amount of computing power?

UQit – an open-source Python package – is the answer. It has been developed specifically for CFD as a dependable, flexible and cost-effective package for assessing various flow simulations (and even experiments). It does this by propagating uncertainty from the inputs and parameters through to the outputs, making the eventual outcomes much more illuminating. With UQit, you can assess a significant amount of data reliably while addressing the sensitivity to the variables at play. You will learn not only what influences your model, but how much influence each variable has. CFD therefore becomes far more reliable with respect to real-world conditions.

However, certain frameworks are required for large flow simulations. UQit techniques are all interrelated but demand attention at particular stages of the process to overcome simulation cost limitations, such as those for wall-bounded turbulent flows. With the right frameworks, you’ll set the appropriate parameters, measure uncertainties, evaluate sensitivity and change conditions easily for future tests. This is especially important when we consider that UQit itself will keep developing to stay in step with state-of-the-art techniques. Your organization must be ready to maximize UQit’s potential for increasingly accurate models, saving time and cost while reducing risk and open questions as engineering projects come together.

Here, we will discuss UQit in detail, breaking down its transformative role within uncertainty quantification (UQ). You will learn about its benefits, functionality and application. Then we’ll examine the frameworks necessary for UQit to deliver the results you want to see. This information will be illustrated visually, as well as with a case study (see the in-situ analysis). Finally, we’ll summarize and offer guidance for getting started with UQit, raising your potential in computational physics.

No matter how accurate a CFD model is believed to be, any data gleaned from it can be sensitive to the variation of different parameters, inputs, etc. In UQ, we target the assessment of the uncertainties in the quantities of interest (QoIs) based on the CFD outcome. Note that UQ techniques are closely related to verification and validation (V&V).

What is a quantity of interest?

QoIs are the outputs of a simulation whose underlying mathematical model is affected by uncertainty. They are measurable values in a simulation that mimic the laws of science and nature as closely as possible. By using a ‘reference value’ – computing the model’s digital reality so it resembles our reality – you gain a litmus test for reliable data.

In so-called computer experiments, QoIs are measured after tweaking the simulation’s parameters: when they change, they affect the system you are analyzing. Assessing uncertainty and sensitivity with changing conditions reveals how your system is likely to function: a vital process for mechanical, structural, marine, energy and other engineering disciplines.

How does uncertainty arise?

Even with the most advanced mathematical models and adherence to fixed parameter values, uncertainties may still come into play, contaminating your results.

- Your initial data may be flawed. There’s no guarantee that the information within your simulated flow is completely accurate.

- Perhaps you have set poor boundary conditions – the limits that a simulated fluid, gas or structure may have when it interacts with its environment. It’s easy to make a geometric mistake or leave knowledge gaps for length, height, weight, volume, etc. Don’t forget that in CFD, smooth geometries are discretized with a set of finite segments.

- One tiny error can change the rest of the model in unexpected ways. These minor miscalculations are tough to find and fix.

- In turbulence simulations, various flow statistics are among the QoIs. You only have finite time for averaging – which leads to uncertainty in the computed statistics.

- Bugs in the code can wreak havoc with modeling. Unless you perform regular bug sweeps, you may not realize they are undoing great work elsewhere.

In CFD, there are two types of uncertainties:

Aleatoric: Inherent uncertainties in your model. These cannot be avoided or removed. They can be the unknown effects of the parameters you will change.

Epistemic: Avoidable uncertainties brought about by shaky modeling or insufficient data – the problems we’ve just described.

Uncertainty quantification, then, is a series of techniques for understanding and quantifying epistemic uncertainties that are influencing your system, as well as measuring the aleatoric effects a good model will produce. You account for more complexity while spotting the factors you can refine for a robust data set.

The challenge of high-fidelity simulations

At first, you may think that a detailed, deterministic simulation – with every possible parameter set in advance – is the key to measuring trustworthy QoIs. A direct numerical simulation (DNS) can lead to the most detailed, accurate results. However, there’s a major roadblock for many organizations: cost and computing power. At high Reynolds numbers, DNS is prohibitively expensive and in many cases impossible.

Other high-fidelity alternatives such as large eddy simulations (LES) use filters to remove small-scale turbulence. They are potentially very precise, resolving turbulent flow structures over several spatial and temporal scales. The problem is, they still require a huge amount of computational power and they introduce more sources of uncertainty compared to DNS. More cost-effective approaches such as RANS (Reynolds-averaged Navier-Stokes) simulations rely more heavily on modeling and hence introduce more uncertainties. To combine the data from these approaches, you need much more advanced UQ methods to set everything in motion.

As one of the first open-source Python packages for UQ in CFD, UQit is uniquely flexible in terms of usage and extension. It can quantify uncertainties using manageable computational resources, and for some analyses even without any disruption to a flow simulation (in-situ estimation of time-averaging uncertainties).

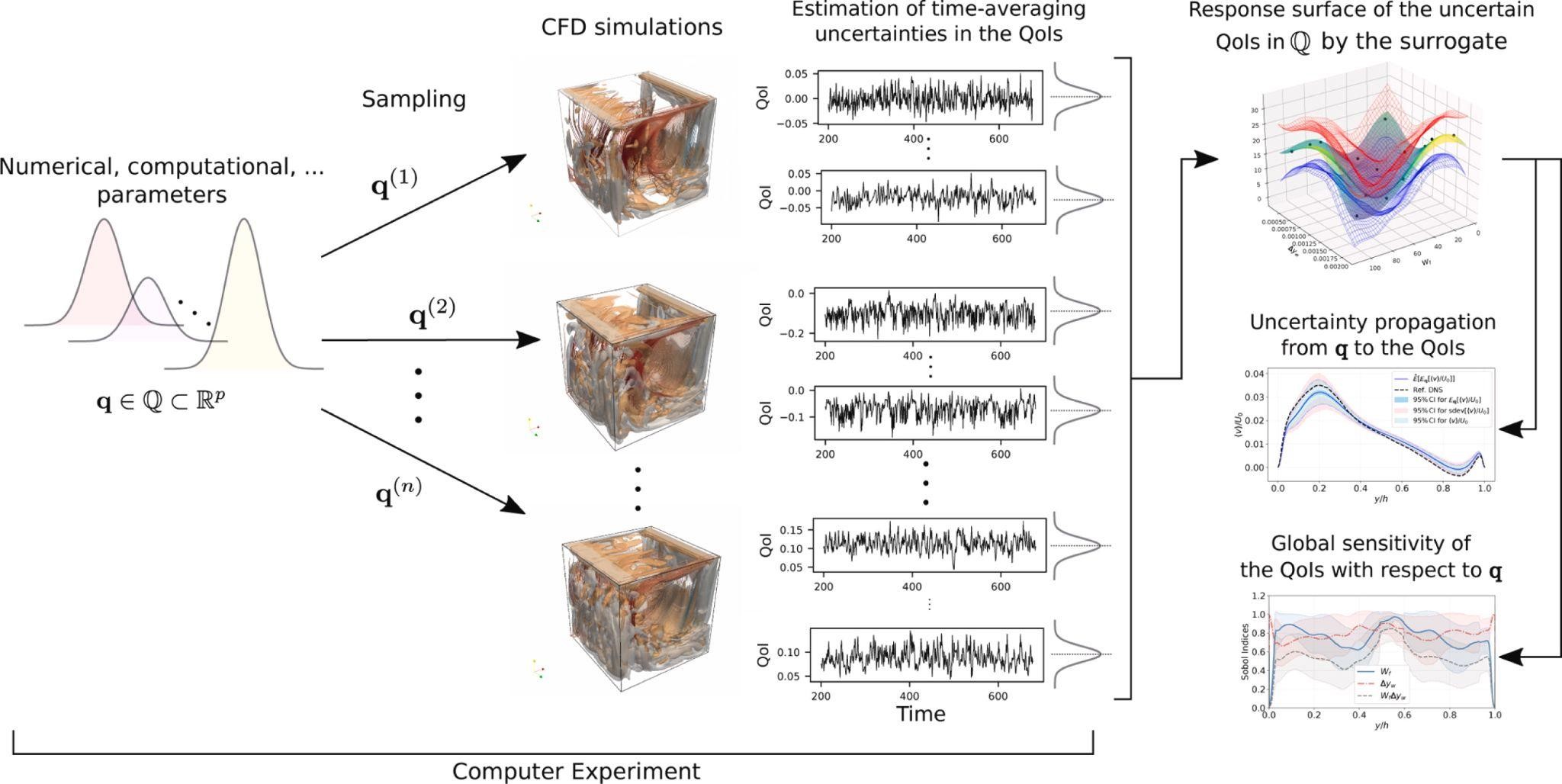

UQit relies on a basic principle: divide the inputs and parameters into two groups. The first consists of physical, computational and numerical properties (e.g. mechanical and thermal states, geometrical uncertainties, or the spacing between grid points). Any output associated with a combination of these parameters may be uncertain due to the second group (for example, finite time-averaging or set boundaries). The uncertain data is analyzed through a ‘surrogate’ – an inexpensive approximation of the actual model, built from a small number of samples and scaled up to represent the whole set of simulations in a computer experiment.

NASA has already voiced the importance of having relevant UQ techniques in its roadmap to 2030. UQit is well-placed to take a role in this revolution for quantitative assessment, offering a freer, more manageable tool for verified and validated results.

But why? What makes it special?

Fig. 1. A schematic representation of some of the techniques implemented in UQit for combining uncertainties of different types, taken from this article.

Built for uncertainty

UQit mainly uses polynomial chaos expansion (PCE) to estimate how uncertainties in the inputs and parameters affect the QoIs. There are many options to take samples, analyze them, and determine their broader influence within the simulation on any scale. It can also set the stage for Gaussian process regression (GPR), creating a probability distribution for the surrogate across all possible values. As a novel technique, UQit combines standard PCE and GPR to create probabilistic PCE, which is suitable for simultaneous propagation of uncertainties from inputs/parameters and observational uncertainty.

A large module library

Currently, UQit has modules for a wide array of uncertainty quantification techniques. The modules can be used for quantification and propagation of input/parameter uncertainties, time-averaging uncertainties in flow statistics, time-series analysis, global sensitivity analysis (GSA), construction of surrogates, and more. Since it’s written in Python based on object-oriented programming, it can be further developed rapidly for various purposes. So, your team stands to benefit from continuous refinement and new modules as more people get to grips with it.

Real-time, large-scale analysis

UQit stands apart from other packages in another key respect: you can establish in-situ UQ. That means your model doesn’t have to grind to a halt while the quantitative investigation takes place. Think of it as treating your simulator as a ‘black box’, integrating seamlessly when desired. Most of UQit, however, remains independent of any CFD code; it only requires data from the flow solver (simulator).

Lower expenses

Considering the computational cost of high-fidelity CFD simulations, UQit takes elements of complex data and uses them to draw broader conclusions about QoIs and uncertainties. This is mainly achieved through constructing appropriate surrogates. You can perform a greater number of UQ tests without fearing you’re going over budget or sapping processing power.

Quantify sensitivity too

As we’ve mentioned, this coding package reveals the impact of uncertainties individually, as well as across the whole picture of your simulation. It helps you prioritize actions that will refine the model or adjust the parameters again for closer testing on a particular variable. Global sensitivity analysis makes UQit even more powerful.

Embracing UQit puts your organization firmly ahead of the curve for finding uncertainties you can test under many conditions. It will help quantify how robust, reliable and sensitive your CFD simulations are. Thanks to the increased accuracy and lower resources associated with UQit modeling, it is an easy sell to other project stakeholders. Max out what you have for large-scale turbulence flows – do more with less, and trust what you see.

Yet it’s important to remember that frameworks are essential for UQit to function as it should. Next, we’ll dive into some of the strategies and configurations that underpin CFD success.

Uncertainties exist by nature and design. This coding package can be used for estimating aspects of both. That means successive actions must set the plan in motion, adjusting techniques to fit whatever goal you’re trying to reach.

Surrogates

First of all, you must create a surrogate for the QoIs. It locates a regressor that approximates a function in parameter space based on a limited number of evaluations. The domain is split into an array of possible conditions – what the simulation does when certain parameters are laid in place. An algorithm then chooses new conditions from the pool at its disposal. Another test; another result is recorded. The process repeats until the regression model has been updated with all potential queries, sampling your flow simulation piece by piece.

There are several approaches to constructing a surrogate, depending on your focus. UQit has a slew of options for doing so. For example, you might want to craft a surrogate by PCE or GPR:

PCE

Polynomial chaos expansion is best for assessing uncertainty from inputs/parameters. The QoI is expanded in polynomials that are orthogonal with respect to the statistical distributions of the inputs, which provides a view of how the model varies when those parameters change. You get the mean, variance and higher moments from a PCE. UQit provides various options for truncating the PCE summation, which can be applied to different sampling schemes.
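
To make the link between a PCE and its moments concrete, here is a minimal NumPy sketch for a single parameter that is uniformly distributed on [-1, 1], using a Legendre basis and Gauss-Legendre quadrature. It does not use UQit's own API; the function names `pce_coefficients` and `pce_mean_variance` and the quadratic toy model are assumptions made purely for illustration.

```python
import numpy as np
from numpy.polynomial import legendre


def pce_coefficients(model, order, n_quad=None):
    """Project `model` onto Legendre polynomials for a parameter xi ~ U(-1, 1)."""
    n_quad = n_quad or order + 1
    nodes, weights = legendre.leggauss(n_quad)  # Gauss-Legendre rule on [-1, 1]
    weights = weights / 2.0                     # include the uniform density 1/2
    values = model(nodes)
    coeffs = np.empty(order + 1)
    for k in range(order + 1):
        basis_k = legendre.legval(nodes, [0] * k + [1])  # P_k evaluated at the nodes
        norm_k = 1.0 / (2 * k + 1)                       # E[P_k^2] under U(-1, 1)
        coeffs[k] = np.sum(weights * values * basis_k) / norm_k
    return coeffs


def pce_mean_variance(coeffs):
    """Mean and variance follow directly from the expansion coefficients."""
    k = np.arange(1, len(coeffs))
    mean = coeffs[0]
    variance = np.sum(coeffs[1:] ** 2 / (2 * k + 1))
    return mean, variance


# Hypothetical toy model standing in for a QoI.
# Analytically, its mean is 2 and its variance is 32/15.
coeffs = pce_coefficients(lambda xi: 1.0 + 2.0 * xi + 3.0 * xi ** 2, order=2)
mean, variance = pce_mean_variance(coeffs)
```

The point of the sketch is that once the coefficients are known, the statistical moments cost nothing extra: no further model evaluations are needed.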

GPR

Gaussian process regression models any finite set of observations as if they were sampled from a multivariate normal distribution. It emphasizes the covariance function, i.e. how one sample influences another sample. Crucially, GPR is an interpolant for noise-free data, but it also handles observations with a general noise structure accurately. The latter feature is unique to GPs.
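
The sketch below illustrates both properties with a zero-mean Gaussian process written in plain NumPy: with negligible noise it interpolates the observations, while a separate noise variance per observation widens the posterior. This is a generic textbook formulation and not the GPyTorch-based implementation that UQit relies on; the squared-exponential kernel, the fixed hyperparameters and the function names are assumptions for illustration.

```python
import numpy as np


def rbf_kernel(xa, xb, length_scale=1.0, signal_var=1.0):
    """Squared-exponential covariance between two sets of 1-D points."""
    diff = xa[:, None] - xb[None, :]
    return signal_var * np.exp(-0.5 * (diff / length_scale) ** 2)


def gpr_predict(x_train, y_train, noise_var, x_test, length_scale=1.0, signal_var=1.0):
    """Posterior mean and variance of the latent function at x_test.

    noise_var holds one noise variance per observation, so the noise
    does not have to be the same for every sample.
    """
    k_tt = rbf_kernel(x_train, x_train, length_scale, signal_var) + np.diag(noise_var)
    k_st = rbf_kernel(x_test, x_train, length_scale, signal_var)
    k_ss = rbf_kernel(x_test, x_test, length_scale, signal_var)
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    v = np.linalg.solve(chol, k_st.T)
    mean = k_st @ alpha
    var = np.diag(k_ss) - np.sum(v ** 2, axis=0)
    return mean, var


# Hypothetical observations of a QoI at seven parameter values.
x_train = np.linspace(0.0, 6.0, 7)
y_train = np.sin(x_train)
noise_var = np.full(7, 0.01)
noise_var[3] = 0.5  # one observation is much less certain than the rest
x_test = np.linspace(0.0, 6.0, 50)
post_mean, post_var = gpr_predict(x_train, y_train, noise_var, x_test)
```

In practice the kernel hyperparameters would be fitted to the data rather than fixed by hand as they are here.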

GSA

With the requisite data from surrogate testing, you may want to uncover parameters that have the most effect on the model’s output. Sensitivity is considered ‘global’ when you apportion every input uncertainty versus output uncertainty over their range of interest. The effects are evaluated and plain to see.

UQit is formidable for GSA because it can pave the way for an in-situ model at reasonable computational cost. It makes future testing even more useful; you can focus on parameters with higher sensitivity indices, or vary lower-scoring parameters further to see whether they show a larger influence on the simulation.
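
As an illustration of what a global sensitivity index measures, the following sketch estimates first-order Sobol indices by Monte Carlo sampling with a pick-and-freeze estimator. It is a generic NumPy example rather than UQit's implementation; the function name `first_order_sobol`, the uniform inputs on [0, 1] and the linear toy model are assumptions. A cheap surrogate would normally take the place of `toy_model`, since the estimator needs many model evaluations.

```python
import numpy as np


def first_order_sobol(model, n_params, n_samples=65536, seed=0):
    """Monte Carlo estimate of first-order Sobol indices for inputs ~ U(0, 1)."""
    rng = np.random.default_rng(seed)
    a = rng.random((n_samples, n_params))
    b = rng.random((n_samples, n_params))
    f_a, f_b = model(a), model(b)
    pooled = np.concatenate([f_a, f_b])
    f_mean, total_var = pooled.mean(), pooled.var()
    indices = np.empty(n_params)
    for i in range(n_params):
        ab_i = a.copy()
        ab_i[:, i] = b[:, i]  # only parameter i is shared with sample set b
        # Centering f_b leaves the expectation unchanged but reduces noise.
        indices[i] = np.mean((f_b - f_mean) * (model(ab_i) - f_a)) / total_var
    return indices


def toy_model(x):
    """Hypothetical linear QoI; its exact indices are 1/14, 4/14 and 9/14."""
    return x @ np.array([1.0, 2.0, 3.0])


indices = first_order_sobol(toy_model, n_params=3)
```

Each index is the fraction of the output variance explained by one parameter on its own, which is what allows the parameters to be ranked.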

Implementing the technique

Once you’ve defined the type of UQ assessment that’s most useful, you can let UQit run on the CFD simulation data. Again, UQit is an open-source package with mostly non-intrusive techniques that leave the CFD solver untouched. Appropriate interfaces can link UQit to the CFD data.

- PCE and Sobol indices involve standard libraries like SciPy and NumPy. For compressed sensing – utilized when higher-order PCEs are constructed from a few samples – we fall back on the CVXPy library.

- GPR, on the other hand, functions with GPyTorch. It’s a powerful way to discover how model parameters fit with predicted and actual outputs.

In-situ setup

CFD simulations in general, and large-scale, high-fidelity turbulence simulations in particular, are ripe for in-situ UQ. However, the installation process isn’t quite as straightforward, because the simulation code must be compiled with an in-situ interface such as VTK-Catalyst. This interface transfers data over to UQit so simultaneous analysis can begin.

Normally, time-series data from turbulent flows is autocorrelated, making it tough to assess the uncertainty in derived, time-averaged quantities. In-situ UQ deals with vast amounts of that information and estimates the uncertainty in the flow statistics (due to finite time-averaging) on the fly.
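
To see why autocorrelation matters, the sketch below compares the naive variance of a sample mean (which assumes independent samples) with an estimate corrected by the sample autocorrelation function. The synthetic AR(1) signal, the truncation lag and the function names are assumptions for illustration; this is a standard textbook estimator, not the specific algorithm implemented in UQit.

```python
import numpy as np


def ar1_series(n, phi, seed=0):
    """Synthetic autocorrelated signal: x[t] = phi * x[t-1] + white noise."""
    rng = np.random.default_rng(seed)
    noise = rng.standard_normal(n)
    x = np.empty(n)
    x[0] = noise[0] / np.sqrt(1.0 - phi ** 2)  # draw from the stationary distribution
    for t in range(1, n):
        x[t] = phi * x[t - 1] + noise[t]
    return x


def sample_acf(x, max_lag):
    """Biased sample autocorrelation for lags 0..max_lag."""
    centered = x - x.mean()
    n = len(centered)
    autocov = np.array(
        [np.dot(centered[: n - k], centered[k:]) / n for k in range(max_lag + 1)]
    )
    return autocov / autocov[0]


def variance_of_mean(x, max_lag):
    """Variance of the sample mean, including the autocorrelation of the samples."""
    n = len(x)
    rho = sample_acf(x, max_lag)
    lags = np.arange(1, max_lag + 1)
    factor = 1.0 + 2.0 * np.sum((1.0 - lags / n) * rho[1:])
    return np.var(x) / n * factor


series = ar1_series(200_000, phi=0.9)
naive = np.var(series) / len(series)             # treats the samples as independent
corrected = variance_of_mean(series, max_lag=100)
```

For this signal the corrected estimate is many times larger than the naive one, which is the sense in which ignoring autocorrelation makes a time average look more certain than it is.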

We describe in-situ setup in our success story, but an HPC provider should be able to take care of it for you. In future, the in-situ framework will be extended to other UQ analyses offered by UQit.

The European Center of Excellence for Engineering Applications (EXCELLERAT) is a major knowledge hub for CFD in the automotive, manufacturing and aerospace industries. We are two of their partners, and saw an innovative project come to life via two organizations – KTH and Fraunhofer SCAI.

We wanted to obtain more reliable uncertainty estimates for statistics in turbulence simulation, as well as to collect, process and store the time-series data efficiently. Remember: sample-mean estimators (SMEs) are uncertain because they are computed from a finite number of samples. The lower the SMEs’ variance, the more they can be trusted.

The challenges

- Regular strategies for measuring uncertainty in time-averages aren’t optimal and can be inaccurate.

- In batch-based methods for estimating time-averaging uncertainties, it’s rarely certain whether the batch of data you’re analyzing is large enough for dependable accuracy. You have to decide on the size before the simulation launches. It’s a guessing game which is not appropriate for an in-situ uncertainty estimation.

- So, it’s imperative to model the autocorrelation function (ACF). But the entire time-series must be brought into play. This is a massive amount of variable data for large-scale simulations to manage.

- Another big challenge? Storing the CFD data. Databanks on this level soak up significant processing power and disk space.
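
The batch-size problem described above can be reproduced with a small experiment. The sketch applies a non-overlapping batch-means estimator to a synthetic correlated signal (a moving average of white noise) with two different batch sizes. The signal, the batch sizes and the function name are assumptions chosen for illustration, not values from the project.

```python
import numpy as np


def batch_means_variance(x, batch_size):
    """Variance of the sample mean of x, estimated from non-overlapping batch means."""
    n_batches = len(x) // batch_size
    trimmed = x[: n_batches * batch_size]
    batch_means = trimmed.reshape(n_batches, batch_size).mean(axis=1)
    return batch_means.var(ddof=1) / n_batches


# Synthetic signal: white noise smoothed over 50 samples, so that
# neighbouring samples are strongly correlated.
rng = np.random.default_rng(0)
window = 50
noise = rng.standard_normal(2 ** 20)
series = np.convolve(noise, np.ones(window) / window, mode="valid")

# For unit-variance noise and averaging weights that sum to one, the
# variance of the long-time sample mean is approximately 1 / n.
reference = 1.0 / len(series)
small_batches = batch_means_variance(series, batch_size=5)     # shorter than the correlation length
large_batches = batch_means_variance(series, batch_size=2000)  # much longer than it
```

With batches shorter than the correlation length the estimate falls far below the reference, while the long batches recover it closely. The difficulty is that the correlation length is generally not known before the simulation starts.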

The solution

- UQit provides us with an in-situ data analysis toolbox. We use it to accurately estimate time-averaging uncertainties in CFD.

- Our framework consists of low-storage updating UQ algorithms, adapters for sampling from a CFD solver, and interfaces linking the CFD solver and UQit.

- Instead of shooting in the dark for appropriate batch sizes, we model the ACF of time-series while a simulation is running with a tiny memory requirement. The CFD solver generates data as the simulation proceeds – utilizing the available memory of the HPC system.

- We came up with an in-situ modeled ACF (IMACF) approach for UQ based on in-situ modeling of the ACF. It boosts the accuracy of estimates for the uncertainty attached to time-averaged values.

- The framework can be applied to any CFD solver running on any computing system with a ParaView Catalyst interface.
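
The idea of a low-storage updating algorithm can be sketched as follows: lagged products are accumulated one sample at a time, so only the most recent `max_lag + 1` values are ever held in memory. This is a simplified illustration and not the IMACF implementation from the project; the class name `StreamingACF` and its methods are hypothetical.

```python
from collections import deque

import numpy as np


class StreamingACF:
    """Accumulate lagged products sample by sample; memory use is O(max_lag)."""

    def __init__(self, max_lag):
        self.count = 0
        self.total = 0.0
        self.lag_products = np.zeros(max_lag + 1)        # running sums of x[t] * x[t-k]
        self.lag_counts = np.zeros(max_lag + 1, dtype=int)
        self.recent = deque(maxlen=max_lag + 1)          # latest samples, newest first

    def update(self, value):
        """Fold one new sample into the running sums."""
        self.recent.appendleft(value)
        window = np.fromiter(self.recent, dtype=float)
        self.lag_products[: len(window)] += value * window
        self.lag_counts[: len(window)] += 1
        self.count += 1
        self.total += value

    def acf(self):
        """Autocorrelation estimate for lags 0..max_lag from the sums so far."""
        mean = self.total / self.count
        autocov = self.lag_products / self.lag_counts - mean ** 2
        return autocov / autocov[0]


# Hypothetical stand-in for values handed over by a solver at each time step.
accumulator = StreamingACF(max_lag=10)
rng = np.random.default_rng(0)
signal = np.convolve(rng.standard_normal(5000), np.ones(5) / 5, mode="valid")
for sample in signal:
    accumulator.update(sample)
current_acf = accumulator.acf()
```

Because `acf()` can be called at any point during the run, intermediate estimates are available while the simulation is still active. For signals whose mean is large compared with their fluctuations, a centered update would be numerically safer than the raw products used here.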

The results

- Significant reduction of data I/O during and after the simulation (down to less than 1%).

- Intermediate UQ results analyzed while the simulation is active.

- Tiny computational overhead (lower than 5%) to maintain the UQ process.

- Far more flexibly defined regions of interest in the flow domain, broadening the application of the UQ techniques to different flow quantities.

The KTH/Fraunhofer case is pertinent for solving the problem of in-situ estimation of uncertainties due to finite time-averaging in turbulence simulations. Learn more about the project – and EXCELLERAT in general – here.

Uncertainties in CFD simulations are unavoidable, and they have to be accurately quantified for the QoIs. Uncertainty quantification seeks to make your data more viable, trustworthy and free of avoidable uncertainties you may mistake for the results of a variable change.

Ideally, we want to reduce as many uncertainties as possible – but we’ll never get rid of them all. That’s why we need to estimate and evaluate them. A complex, large-scale experiment deserves an analysis that accounts for every variable as well as the efficacy of the data you’ve used to simulate them.

Although simulations such as accurate LES and DNS may suffer from fewer potential uncertainties compared to RANS, they demand too much computational effort. UQit makes this problem less significant. You can dip into a growing library of modules for turbulent flows and run extensive analyses that don’t break the bank or your processing capabilities.

The essential components to UQit are . . .

- Choose from an array of statistical and computational methods, including GPR, PCE and GSA. Each of them provides an advantage for various parameters and digital environments. You can combine these in various ways, driven by your needs. In particular, PCE and GSA can be combined with GPR surrogates.

- Build a surrogate for the simulation’s outputs (that are potentially uncertain) in the input/parameter space. It uses algorithms to test all of the parameters you want to observe. UQ analyses can be applied to the surrogates that demand less computing power and memory.

- Use the in-situ framework to assess time-averaging uncertainty on the fly. You can do this with very large simulations, because you’re processing the simulation output while it’s still in the HPC system’s memory.

- Note that UQit will be continuously developed. The procedure for development is i) characterize a need, ii) design UQ techniques and algorithms, iii) implement techniques, test them, and create documentation. The process will be described in academic publications. Once the publication(s) are published, the results of steps ii) and iii) will be released in open-source.

We’ve already mentioned that UQit is in its infancy with regard to existing modules and malleability. Open-source coders are going to open up its potential ever wider for CFD and UQ. There’s no telling what this technology will look like in 5, 10 or 20 years from now; it is a promising evolution that your organization should keep pace with.

A high-powered computing partner, like us, isn’t just necessary for getting your team off the ground with UQit. We’ll help you innovate, expand and lead the field for precise CFD modeling. Uncertainties will be measured and dealt with more quickly and accurately. New simulated environments will be ready for testing. You can follow any engineering capability down fresh avenues of research, knowing that UQ is one less thing to worry about.

Sign up to EXCELLERAT’s self-serve portal for assistance with your data models and engineering projects.

Or if you’d rather get hands-on with UQit today, visit this open-source link.